The General Election 2015 & The Polls – What Happened?

The general election result came as a surprise to most commentators, not least those in the opinion polling industry. As a result, the industry set up the BPC/MRS Inquiry into the Performance of the Opinion Polls at the 2015 General Election, chaired by Professor Patrick Sturgis, which is investigating why the final result was so different from most of the published voting polls in the final week of the campaign. What follows is Survation’s own report on what we think were the main causes of this discrepancy in relation to our polling, which has been submitted as evidence to the Inquiry.

Summary of the Polling

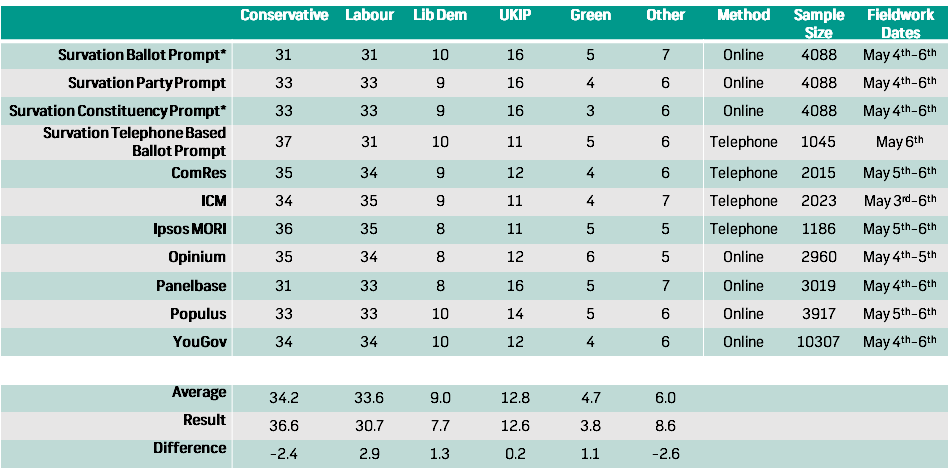

The table below shows the final voting intention polls from all companies, contrasted with the actual election result at the bottom. The top three rows show the results of the three different styles of voting intention question we asked in our final online poll for the Daily Mirror: a four-party prompt; a four-party prompt asking people to think about the candidates standing in their constituency; and, at the top, the “ballot prompt”, in which respondents were shown a replica of the exact ballot paper they would face in the polling booth, complete with all candidate names, party descriptions and logos, with candidates listed in alphabetical order.

As can be seen, none of these questions in our final online poll correctly predicted the UK vote shares for Labour and the Conservatives, and the same was true of every other BPC pollster.

Across all companies and modes, the average error was very similar, in particular regarding the gap between Labour and the Conservatives.

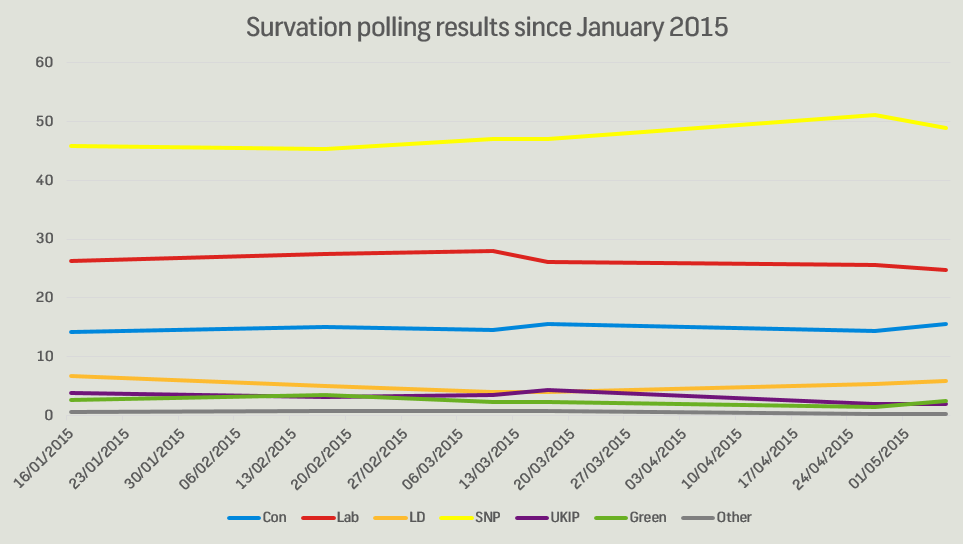

There were, however, some parts of the election that these final polls did predict well. Most companies had vote shares for the Liberal Democrat, UKIP and Green parties pretty close to what they ended up with. Meanwhile in Scotland, as shown by the chart below, Survation’s regular polls since January have consistently predicted results very close to the final results of SNP 50, Lab 24, Con 15. Survation’s final Scottish poll had results for all parties within 1.6 points of their eventual vote shares, well within margin of error.
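As a rough check on the “well within margin of error” claim, the conventional 95% margin of error for a share p from a simple random sample of size n is 1.96·√(p(1−p)/n). The sketch below assumes a hypothetical n of 1,000, since the Scottish poll's sample size is not given here, and ignores the design effect of weighting, which would widen the interval further.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error, in percentage points, for a share p (0-1)
    estimated from a simple random sample of size n (z=1.96 gives ~95%)."""
    return 100 * z * math.sqrt(p * (1 - p) / n)


# Hypothetical sample size: the Scottish poll's actual n is not stated here.
n = 1000
for party, share in [("SNP", 0.50), ("Lab", 0.24), ("Con", 0.15)]:
    print(f"{party}: +/- {margin_of_error(share, n):.1f} points")
```

Under these assumptions every party's margin exceeds the 1.6-point maximum error reported for the final Scottish poll.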

This suggests that there was something fundamental about the nature of the race in England & Wales, particularly in relation to the Conservative-Labour gap, that led the national polling to fail to predict the outcome, rather than a wider flaw that would have made all polling useless.

One possible explanation, if there was indeed a late swing and differential turnout in England & Wales driven by concerns about the SNP’s influence in a hung parliament, is that such concerns would not have existed for most voters in Scotland. They would by and large have been relatively unconcerned by a possible post-election Labour-SNP deal, given that three quarters of Scottish voters backed one of those two parties.

Given that telephone and online polls alike failed to predict the Conservative-Labour gap nationally, while both produced similar results in Scotland, there does not appear to be a mode effect across the industry.

However, looking at the first table above, fieldwork dates may be significant: most final published polls began fieldwork three days or more before the election. The only exception is Survation’s telephone poll on the evening of 6th May. This poll, the only one conducted entirely on the eve of the election, was not published until the morning after the election, because it was ready too late to carry in print newspapers or broadcasts and because of a concern at the time that it looked like a rogue outlier. Raw SPSS data for this poll were shared with BPC President Professor John Curtice at 7am on 8th May.

Survation Telephone Poll on 6th May: Method

Sample Frame Data

35,000 records, prebalanced by age, sex and region. Younger people (18-34) and those aged 35-54, both harder-to-reach groups, were over-represented in the sample with excess records. A combination of landline and mobile numbers was used, with mobile numbers prioritised. This was not random digit dialling, but a random stratified sample drawn on pre-known demographics.

Date

The poll was conducted by phone on 6th May 2015 between 3pm and 9pm, with 31 callers working these hours from our in-house call centre.

Method

The poll used the ballot prompt method: callers confirmed the respondent’s postcode and constituency, then prompted the list of candidates standing in that constituency, in ballot order. The call supervisor monitored age, sex and region targets throughout fieldwork, which allowed them to ensure that hard-to-reach groups were specifically included.

Weighting

Data were weighted by age, sex, region, 2010 past vote, and likelihood to vote.
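The report does not describe its weighting procedure beyond the variables listed (it later refers to a “rim weight”). Rim weighting is commonly implemented by iterative proportional fitting, or raking: each variable's weighted margin is matched to its target in turn, repeating until the weights settle. A minimal pure-Python sketch follows; the toy sample and targets are hypothetical, and the separate likelihood-to-vote factor is left out.

```python
def rake(respondents, targets, max_iter=100, tol=1e-9):
    """Rim-weight (rake) respondents so each variable's weighted margin
    matches its target proportions.

    respondents: list of dicts mapping variable -> category
    targets: {variable: {category: target share}}, each summing to 1
    Returns one weight per respondent; weights average to 1.
    """
    n = len(respondents)
    weights = [1.0] * n
    for _ in range(max_iter):
        max_change = 0.0
        for var, shares in targets.items():
            # Current weighted total for each category of this variable
            totals = {cat: 0.0 for cat in shares}
            for w, r in zip(weights, respondents):
                totals[r[var]] += w
            # Scale every respondent so this margin hits its target exactly
            for i, r in enumerate(respondents):
                factor = shares[r[var]] * n / totals[r[var]]
                max_change = max(max_change, abs(factor - 1.0))
                weights[i] *= factor
        # Stop once a full pass over all variables barely moves any weight
        if max_change < tol:
            break
    return weights


# Hypothetical toy sample: too many men and too many people aged 55+
sample = [
    {"sex": "M", "age": "18-34"},
    {"sex": "M", "age": "55+"},
    {"sex": "M", "age": "55+"},
    {"sex": "F", "age": "18-34"},
    {"sex": "F", "age": "55+"},
]
targets = {"sex": {"M": 0.5, "F": 0.5}, "age": {"18-34": 0.5, "55+": 0.5}}
weights = rake(sample, targets)
```

Raking only matches the margins of each variable, not their joint distribution, which is one reason a weighting variable that has lost its link to current behaviour (such as 2010 vote) can leave imbalances uncorrected.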

That said, why this poll was not published matters less than what it shows. Conducted by telephone using a random stratified sample of lifestyle data, selected on pre-known demographics to be nationally representative, it asked a single voting intention question, prompting all candidate names in each respondent’s constituency in ballot order. It produced vote shares for Labour and the Conservatives within 0.6 percentage points of what they actually received.

This suggests a few things:

- It was not “impossible” for polls to accurately predict the election ahead of time, suggesting polling methods are not completely broken or without value. It should also be remembered that the exit poll which contradicted previous polling was itself a “poll” – supporting the inherent usefulness of polling as a practice even as it undermined the results of previously published polls.

- Survation’s ballot paper prompt question appears to have worked well when it was the only question asked, as here. However, where we asked it as the third in a series of voting questions (as in our online GB poll) it may have exaggerated the respondents’ propensity to pick a different party from their original choice, or even to pick a party at all if in fact they were undecided and likely in the event to not vote.

- The fact that the exit poll and the final Survation telephone poll saw the Conservatives doing better, whilst earlier published polling did not, suggests that people may have decided to act differently in the last 24 hours of the campaign, compared with how they had previously told pollsters they expected to.

Possibility of Late Swing?

This raises the possibility of a “late swing” right at the end of the campaign. (For these purposes, we include people deciding not to vote as a component of “late swing”, as we are exploring all changes in voting behaviour, including the decision not to vote; others have categorised this separately as “differential turnout”.)

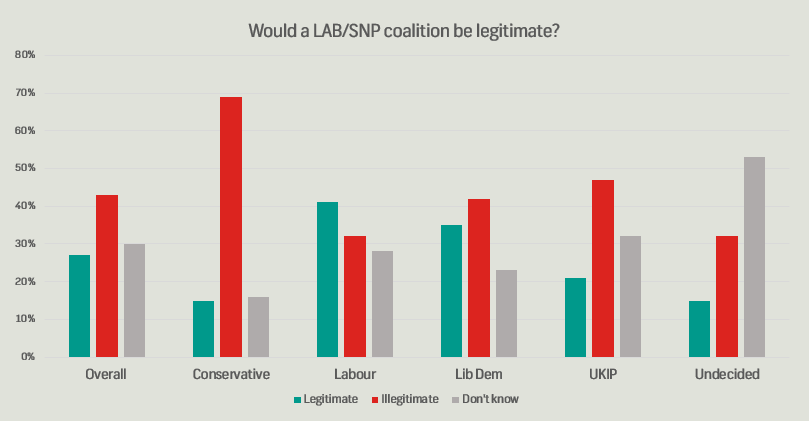

There are a couple of other reasons to think that this might have occurred. Firstly, a Survation poll for the Mail on Sunday the week before the election highlighted public concerns about the possible outcome. With all indications pointing to a hung parliament at the time, the public were very concerned that the election would result in some form of Labour-SNP deal, something which, as the chart below shows, voters of all parties were concerned about. Even among Labour voters UK-wide, well under half thought such an outcome would be “legitimate”, while among UKIP and Liberal Democrat voters concern was higher still.

Most people instinctively did not want a hung parliament despite being repeatedly told it was the outcome they should expect. 43% of the public in our pre-election Mail on Sunday poll went so far as to say there should be another general election in the case of a hung parliament, to try and produce a clearer result.

Might these concerns about the outcome they were heading towards have been enough to prompt voters to change their behaviour? For UKIP or Liberal Democrat supporters to “lend” their votes to the Conservatives in order to avoid this looming threat of supposed chaos?

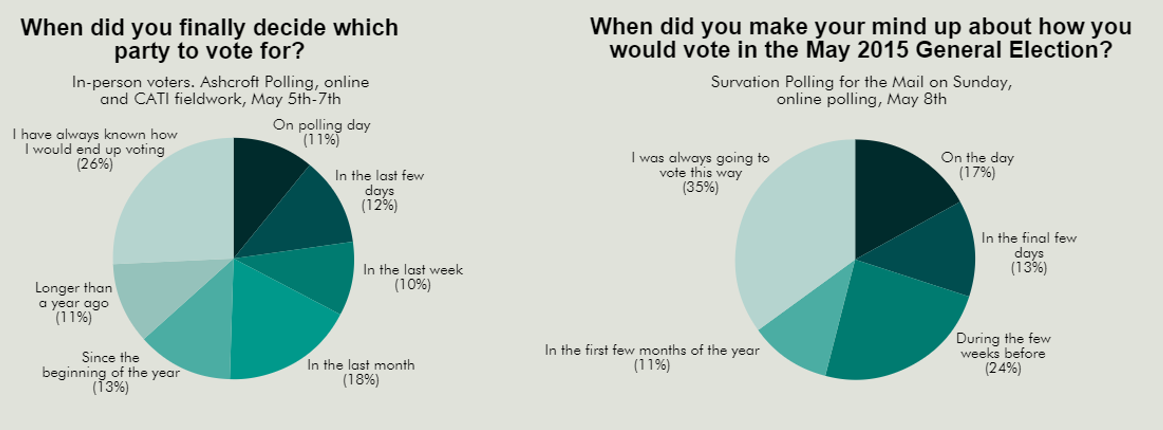

A second piece of evidence comes from the 8th May poll conducted by Survation for the Mail on Sunday directly after the election. 31% of voters told Survation they made up their mind who to vote for “in the final few days” of the campaign, including 17% who said they made up their minds “On the day” itself. These figures are similar to those found in an Ashcroft poll in which 33% said they made up their minds “in the last week” or less.

Recontact Study

To test this hypothesis in more depth, we conducted a recontact study of 1,755 of the respondents from the 4,000 who completed our final online poll for the Daily Mirror. They were recontacted online between 19th and 26th May.

Given the difference between the figures in our final poll for the Daily Mirror and the election result, we wanted to find out to what extent the people we interviewed on the Monday, Tuesday and Wednesday before the election had changed their minds by polling day.

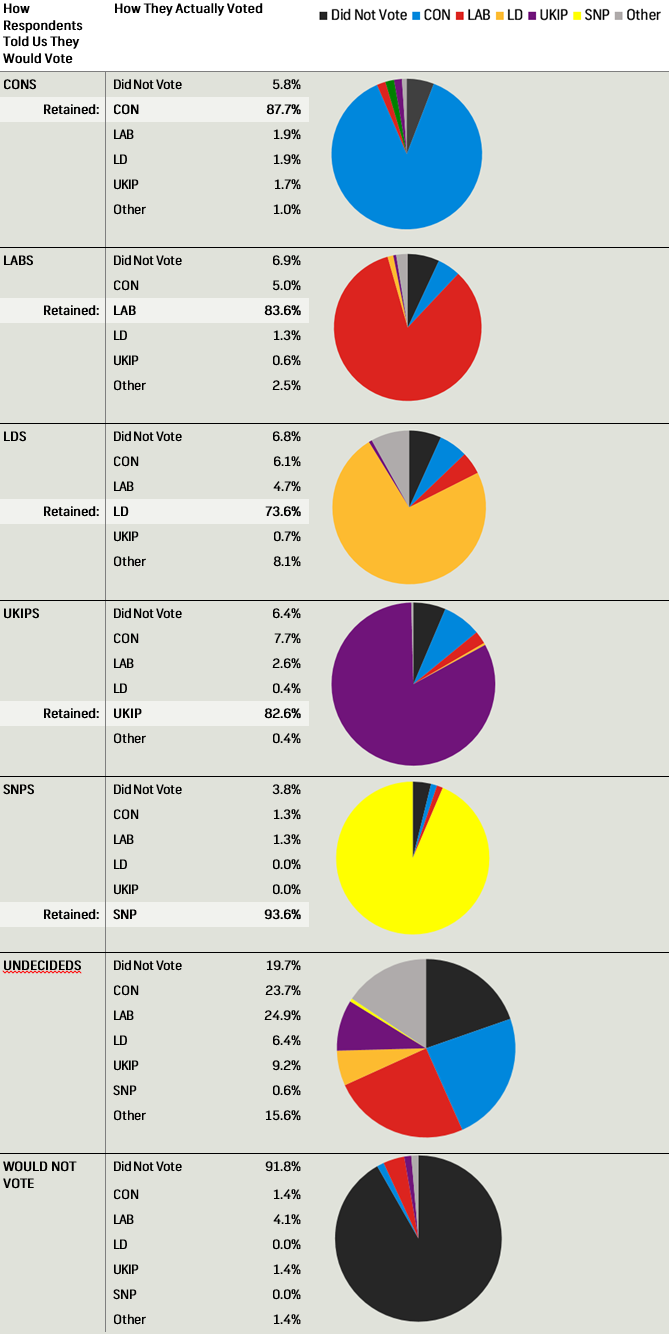

The results of this recontact work show that 15% of people who expressed a voting intention in our Daily Mirror poll conducted during the week of the election say they ended up behaving differently to how they had originally told us they intended to vote – either voting for a different party or not turning up to vote at all.

The four main types of behaviour that were observed were as follows:

- Labour voters not turning out and, to a lesser extent, switching to the Conservatives

- UKIP voters switching to the Conservatives

- Liberal Democrat voters switching to the Conservatives or not turning out

- Undecided voters splitting fairly equally between Labour and Conservatives

With the exception of the last of these four, all other shifts were to the net benefit of the Conservative Party. Conservative voters themselves turned out in high numbers and were very unlikely to change their mind (as were SNP voters). The total effect of this was equivalent to a 1.4% swing from Labour to the Conservatives – not as much as actually shown in the election, but accounting for a significant proportion of the difference between the final Survation pre-election online poll and the election result.
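The 1.4-point figure is presumably a conventional (Butler) swing: the average of the Conservative gain and the Labour loss. Applying that formula, as an assumption, to the rounded net changes reported in this section (Con +2.2, Lab -0.4) gives 1.3 points; the small gap from 1.4 may simply reflect rounding of the underlying figures.

```python
def butler_swing(con_change: float, lab_change: float) -> float:
    """Conventional (Butler) swing from Labour to Conservative, in points:
    the mean of the Conservative change and the negated Labour change."""
    return (con_change - lab_change) / 2


# Rounded net changes from the recontact study, in percentage points
swing = butler_swing(con_change=2.2, lab_change=-0.4)
print(f"Swing Lab -> Con: {swing:.1f} points")
```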

The full breakdown of observed shifts is shown in the table below.

The net changes between when people were interviewed in our final online poll and how they report actually having voted are as follows:

Con: +2.2%

Lab: -0.4%

LibDem: -0.8%

UKIP: -0.7%

SNP: -0.3%

Others: +0.0%

Applying these net changes to the first voting intention question published in our final online poll gives the following results, which are what we would expect our final online poll to have shown had it been conducted on election day using the same sample and method.

(Difference compared to actual election result shown in brackets)

Con: 35.1% (-2.7)

Lab: 32.3% (+1.1)

LibDem: 8.0% (-0.1)

UKIP: 15.2% (+2.3)

SNP: 4.7% (-0.2)

Other: 4.3% (-0.9)

As can be seen, this would have brought the final poll significantly closer to the actual result, although some differences remain unaccounted for, particularly the Conservative figure, still 2.7 points too low, and the UKIP figure, still 2.3 points too high. Overall, this suggests that “late swing” or changed behaviour accounts for around 40% of the discrepancy between our final online poll and the actual result.
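The report does not spell out how the 40% figure is calculated. One reading that reproduces it, assumed here, measures the share of the error in the Conservative lead over Labour that the adjustment closes. The pre-election shares and actual results below are back-calculated from the figures in this section (pre-election = adjusted minus net change; actual = adjusted minus the bracketed difference), not quoted from elsewhere.

```python
# Figures from this section, in percentage points.
net_change = {"Con": 2.2, "Lab": -0.4, "LibDem": -0.8, "UKIP": -0.7, "SNP": -0.3, "Other": 0.0}
adjusted = {"Con": 35.1, "Lab": 32.3, "LibDem": 8.0, "UKIP": 15.2, "SNP": 4.7, "Other": 4.3}
diff_vs_actual = {"Con": -2.7, "Lab": 1.1, "LibDem": -0.1, "UKIP": 2.3, "SNP": -0.2, "Other": -0.9}

# Back-calculated (derived, not quoted) pre-election shares and actual results
pre = {p: adjusted[p] - net_change[p] for p in adjusted}
actual = {p: adjusted[p] - diff_vs_actual[p] for p in adjusted}


def con_lead(shares):
    """Conservative lead over Labour, in points."""
    return shares["Con"] - shares["Lab"]


# Assumed definition: fraction of the lead error closed by the adjustment
closed = (con_lead(adjusted) - con_lead(pre)) / (con_lead(actual) - con_lead(pre))
print(f"Con lead: pre {con_lead(pre):.1f}, adjusted {con_lead(adjusted):.1f}, "
      f"actual {con_lead(actual):.1f}")
print(f"Share of lead error explained: {closed:.0%}")
```

On these figures the Conservative lead moves from 0.2 to 2.8 points against an actual 6.6, closing 2.6 of the 6.4-point error, or about 41%.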

Analysis conducted by the British Election Study, and a very similar recontact study by TNS-BMRB referred to at the first public meeting of the Inquiry, also lend some support to these conclusions.

Additional Factors

We now need to consider what could account for the remaining discrepancy. It is unlikely that any single factor was responsible but there are certain plausible hypotheses to suggest.

First we must consider the possibility that UKIP voters are generally over-represented, and Conservative voters under-represented, on online panels. Certainly online polls have consistently generated higher UKIP vote shares than telephone polls in recent years and Survation’s own final telephone poll on 6th May actually had UKIP two points too low, compared with our final online poll which showed them three points too high.

UKIP supporters were consistently down-weighted in Survation’s pre-election online polls, while Conservative voters were up-weighted, mainly via income weighting. In theory, weighting should correct for any imbalances in panel composition, but in practice it appears not to have gone far enough. Survation have identified changes to our income weighting that take greater account of household size, which would slightly increase the extent to which more affluent respondents are up-weighted.

A possible explanation is that the political upheaval since 2010 meant that weighting to 2010 vote or historic party ID targets was not an effective proxy for political views in 2015, as it did not capture which 2010 Conservatives were still voting Conservative and which had now switched to UKIP, or whether there were enough of the different types of 2010 Liberal Democrat voters.

In theory, if this was a large cause of the problem, this should now be corrected for through weighting by 2015 vote, unless of course the next parliament sees as many dramatic and complex inter-party shifts in support as the last one.
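The household-size change to income weighting mentioned above is not specified in the report. One standard way to account for household size, assumed here purely as an illustration, is to equivalise household income with the OECD-modified scale before assigning respondents to income bands for weighting.

```python
def oecd_modified_scale(adults: int, children: int) -> float:
    """OECD-modified equivalence scale: 1.0 for the first adult, 0.5 for each
    additional person aged 14+, 0.3 for each child under 14."""
    if adults < 1:
        raise ValueError("household needs at least one adult")
    return 1.0 + 0.5 * (adults - 1) + 0.3 * children


def equivalised_income(household_income: float, adults: int, children: int) -> float:
    """Household income expressed as the equivalent for a single adult."""
    return household_income / oecd_modified_scale(adults, children)


# Two hypothetical households with the same gross income
print(equivalised_income(40000, adults=1, children=0))
print(equivalised_income(40000, adults=2, children=2))
```

On this scale, £40,000 for a couple with two children under 14 is equivalent to roughly £19,000 for a single adult (40,000 / 2.1), so the same gross income band can correspond to quite different levels of affluence.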

Non-Voters and Turnout Modelling

The other factor that must be considered is non-voters. Despite expectations that turnout would exceed 70%, it rose only marginally, by one point on 2010, to 66%. Parties other than the Conservatives suffered disproportionately from the lower-than-expected turnout, particularly the Liberal Democrats.

Survation have identified some changes we can make to the way we weight people who did not vote in the previous general election, both in terms of their rim weight and in terms of their likelihood to vote weight. Together with the changes to income weighting above, these measures would have increased the Conservative vote share by about one further percentage point.

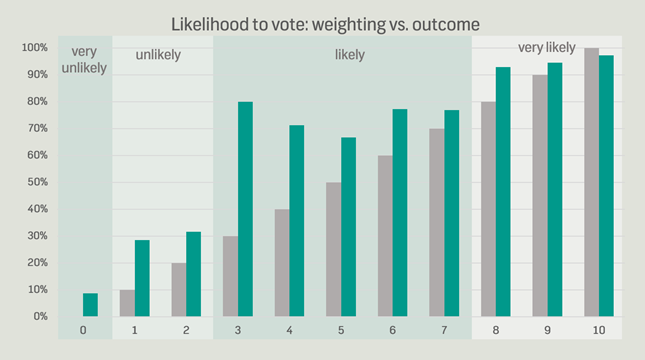

More broadly, there is a clear discrepancy between how likely respondents said they were to vote and how likely they actually were to report later having voted. The chart below shows respondents’ expressed likelihood to vote (in grey) against the proportion who later reported having voted (in green).

Although some observations are based on small sample sizes (particularly in the middle of the scale), we can clearly see that the relationship between expressed likelihood to vote and later reporting having voted was not linear.

Voters divide into four broad groups: those who are very likely to vote, those who have a high probability of voting, those who are unlikely to vote, and those who say they will not vote. Within each group, the likelihood of reporting having voted does not vary significantly. At the same time, the proportion who reported having voted clearly exceeded actual turnout. This suggests either that panellists exaggerated their voting activity (a possible danger in online panels, where respondents may over-claim to avoid a feared screen-out in which they are disqualified and not paid for their time), or that our raw online sample was significantly short of non-voters (as mentioned above, something we hope to correct by changing our weighting to properly up-weight less politically active respondents). In light of this analysis, we are reviewing how we weight likelihood to vote in future.
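One way to act on the non-linear pattern described above is to replace each stated likelihood score with the observed rate of reported voting for that score, pooling sparse scores within their group. The sketch assumes a 0-10 scale and invents the group cut-offs and the minimum cell size, none of which the report gives. Because reported voting exceeded actual turnout, such rates would still need scaling down to match real turnout.

```python
from collections import defaultdict


def band(score: int) -> str:
    """Hypothetical cut-offs for the report's four broad groups."""
    if score == 10:
        return "very likely"
    if score >= 7:
        return "high probability"
    if score >= 1:
        return "unlikely"
    return "will not vote"


def calibrate_ltv(records, min_n=30):
    """Return {score: turnout weight} from (stated_score, reported_voted) pairs.

    Each score gets the observed share who later reported voting; scores with
    fewer than min_n observations fall back to the pooled share for their band.
    """
    by_score = defaultdict(lambda: [0, 0])  # score -> [voted, total]
    by_band = defaultdict(lambda: [0, 0])   # band -> [voted, total]
    for score, voted in records:
        for counts in (by_score[score], by_band[band(score)]):
            counts[0] += int(voted)
            counts[1] += 1
    weights = {}
    for score in range(11):
        voted, total = by_score[score]
        if total < min_n:
            # Too few observations: use the pooled rate for the whole band
            voted, total = by_band[band(score)]
        weights[score] = voted / total if total else 0.0
    return weights
```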

Summary of Conclusions

Clearly, such large discrepancies in the polls are unlikely to be explained by any one factor alone. However, our research suggests that the late swing of voters to the Conservatives, together with low turnout among those intending to vote for other parties, accounts for approximately 40% of the total gap, making it the largest single factor.

Other potential contributing factors that we can identify include the weighting effects outlined above, in particular the way in which non-voters are included in our rim weight and the way in which we weight by income, which has previously not properly reflected the number of high-income households in Britain. We have also discussed the ineffectiveness of 2010 vote / party ID weighting, which we hope will be improved (at least for the time being) by weighting to 2015 vote. These weighting factors could account for another 20-30% of the total discrepancy between our final online polling and the result.

Finally, we cannot rule out the presence of what we here choose to call “tactical Tories”. These are not necessarily the same as the “shy Tories”, historically long-term Conservative supporters embarrassed to admit their allegiance, but rather people who genuinely did not like the Conservative Party yet, however begrudgingly, “lent” it their vote in 2015 to avoid the unstable hung parliament they had been told to fear. Understandably, particularly given the Conservative majority government that resulted, such people might be reluctant to admit afterwards what they had done.

That is not to say that such respondents necessarily lie to pollsters, but there is always a significant minority who refuse to disclose their voting intention while answering the rest of the survey. 45 respondents to our recontact survey refused to say how they had voted, most of whom had also refused or said they were undecided in our pre-election survey. Under the “soft refusal” system of weighting, these respondents would have been allocated back to their 2010 vote (if disclosed), at some fraction of a full vote, in our final pre-election poll. But if the reason they were undecided or refusing to answer was that they were considering a tactical vote for a different party from usual, such a method would have been ineffective.

In general, it is unlikely that supporters of all parties are equally likely to refuse to disclose their voting intention. Had around half of the refusals in our recontact study been “tactical Tories”, it would have explained all of the remaining discrepancy in the results. This area requires further research, but if supporters of certain parties can be shown to be generally more likely to refuse, then weighting, sampling or question design will need to be adjusted to correct for the effect this has on published voting intentions.
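The “soft refusal” reallocation discussed above can be sketched as follows. The report says only that refusers were allocated back a factor of their 2010 vote; the factor of 0.5 used here, and the (current intention, past vote) data layout, are hypothetical.

```python
from collections import Counter


def soft_refusal_shares(respondents, factor=0.5):
    """Voting-intention shares with refusers/undecideds partly reallocated.

    respondents: list of (current_vi, past_vote) pairs; current_vi is a party
    name, or None for refused/undecided; past_vote is a party name or None.
    Each refuser with a known past vote adds `factor` of a vote to that party
    (factor is a hypothetical value). Refusers with no past vote are dropped.
    """
    totals = Counter()
    for vi, past in respondents:
        if vi is not None:
            totals[vi] += 1.0
        elif past is not None:
            totals[past] += factor
    grand = sum(totals.values())
    if not grand:
        return {}
    return {party: votes / grand for party, votes in totals.items()}
```

The weakness described above shows up directly in this logic: a 2010 Liberal Democrat who is now refusing because they are weighing a tactical Conservative vote is still credited, in part, to the Liberal Democrats.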

Going forward, Survation are looking to continue to improve our online polling methods as they relate to income, the participation of non-voters, the capturing and weighting of “refused” voters and the ways in which we ask our voting intention questions, prompting candidate names in our first question wherever possible and fair to do so.

The one point which is at least partly outside our control as pollsters, of course, is swing or turnout effects that manifest very late in a campaign, after fieldwork has been conducted. In general, where elections or referenda are important, pollsters should aim to complete their final polls as close to polling day as possible. However, pollsters are not think-tanks or academic institutions – we often depend on media clients to commission and then publish our findings.

Our final telephone poll was not commissioned by any client. It was Survation’s own idea, as with the Scottish independence referendum, where we produced a telephone poll the day before the referendum as a check on our own online method, which was in the event published online by the Daily Record at 11pm on 17th September 2014. In the case of the general election, the results of our telephone poll were available too late on the Wednesday evening to carry in any print or broadcast media.

In general, the lesson for those who conduct, report on and draw conclusions from polling is this: print deadlines for media clients, together with the regulatory restrictions on broadcast clients reporting polls on polling day itself, make it very challenging to commission and publish a late poll intended to capture a potential late swing. There are lessons here for the media in how they commission, report on and interpret polling, as well as lessons for pollsters themselves. These are all matters the Inquiry will doubtless look into further, and Survation will offer our full cooperation to Professor Patrick Sturgis and the rest of the Inquiry panel in their investigation.

Note: A version of this report was presented by Damian Lyons Lowe to the first public meeting of the BPC/MRS Inquiry into the Performance of the Opinion Polls at the 2015 General Election on 19th June. Slides from the presentation can be seen here.
