Twindex: Use with Caution

Survation’s Grace Philip explains why journalists should be careful about using Twindex

On 1 August, Twitter launched its Political Index (or Twindex), a new service that aims to gauge the US presidential election by measuring which candidate is trending and “scoring” each of them on a daily basis, producing results in an instant. By taking the 400 million tweets posted each day and analysing them, Twindex presents a whole new way to predict voting behaviour. Except, of course, for the fact that it doesn’t.

The Twindex results are calculated by a company called Topsy, which takes the data from every tweet in the world, extracts all the tweets in which Obama or Romney is mentioned, runs a sentiment analysis on them and then compares the findings to a preset neutral baseline. By taking three days’ worth of tweets each day, and giving the older ones less weight than the newer ones, it scores each candidate numerically: 0 to 49 is a negative score, 50 is neutral, and anything above 50, up to 100, is positive. But while Twindex may be a good way to analyse tweets, that does not make it an effective method of measuring public opinion.
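To make the scoring idea concrete, here is a minimal sketch of a recency-weighted score on a 0 to 100 scale. It is not Topsy's actual formula, which is not described here in that much detail: the function name `decayed_score`, the weights (0.5, 0.3, 0.2), the sentiment range of -1 to 1 and the linear mapping onto 0 to 100 are all assumptions chosen for illustration.

```python
def decayed_score(daily_sentiment, weights=(0.5, 0.3, 0.2), baseline=0.0):
    """Combine three days of average sentiment into one 0-100 score.

    daily_sentiment: average sentiment per day, newest first, each in [-1, 1].
    weights:         hypothetical recency weights (newest day counts most).
    baseline:        hypothetical "neutral" sentiment level to compare against.
    """
    if len(daily_sentiment) != len(weights):
        raise ValueError("need exactly one sentiment value per weight")

    # Weighted average, so older days count for less than newer ones.
    weighted = sum(w * s for w, s in zip(weights, daily_sentiment)) / sum(weights)

    # Measure against the neutral baseline, then map [-1, 1] onto [0, 100]
    # so that a result equal to the baseline lands on 50.
    score = 50 + 50 * (weighted - baseline)
    return max(0.0, min(100.0, score))


# Newest day mildly positive, older days neutral and mildly negative.
print(round(decayed_score([0.4, 0.0, -0.2]), 1))
```

Note that nothing in a calculation like this knows who wrote the tweets. It summarises the tone of the tweets it is given, which is a different thing from the opinion of the electorate.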

Twindex technology attempts to analyse Twitter conversations in real time, arguably giving it the edge over traditional polling companies. “By illustrating instances when unprompted, natural conversation deviates from responses to specific survey questions,” says Adam Sharp, Twitter’s Head of Government News and Social Innovation, “the Twitter Political Index helps capture the nuances of public opinion.” But ultimately, Twindex is let down by its own assumption: that Twitter’s 140 million daily active users accurately represent the population simply because there are so many of them. In truth, Twitter can claim only 15% of Americans who are online, and only half of those tweet every day.

The people who do tweet, and so form Twindex’s sample, represent a biased microcosm of society. According to Tom Rosenstiel of the Pew Research Center, who spent a year studying conversations on social media, all Twitter is able to do is gauge the opinions of a younger and more politically engaged group of users. Unfortunately for Twindex, this gives undue weight to one section of the population, thus creating a biased sample. “Once the sample is biased,” says Survation’s Chief Executive Damian Lyons Lowe, “the end results are never going to be a true representation of public opinion, even if you make the sample size larger. The science has already been left out and the damage is already done.”

A classic example of this phenomenon came during the 1936 US presidential election, when Literary Digest sent out ten million surveys on voting intention to readers of its own magazine, as well as to registered telephone and car owners. The magazine received almost 2.3 million completed surveys back and concluded from them that Alfred Landon was ahead of Franklin Roosevelt by 57% to 43%. But Literary Digest failed to gather demographic information from its sample, which was already ingrained with bias: the Digest’s poll could only claim to represent the opinions of an affluent demographic, as only the wealthy would have been on the records used. Because no effort was made to weight the results, lower-income groups were not properly represented. Meanwhile, George Gallup gathered voting intention from a much smaller sample (fifty thousand) that was properly weighted, and so he was able to correctly predict Roosevelt’s landslide win.
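The arithmetic behind this can be sketched in a few lines. The figures below are invented for illustration and are not the 1936 data: they assume a population that is 25% affluent and 75% less affluent, with a candidate supported by 70% of the first group and 35% of the second. The function names `raw_share` and `weighted_share` are likewise hypothetical.

```python
def raw_share(sample):
    """Unweighted support: total supporters divided by total respondents.

    sample maps each group to a (respondents, supporters) pair.
    """
    respondents = sum(n for n, _ in sample.values())
    supporters = sum(s for _, s in sample.values())
    return supporters / respondents


def weighted_share(sample, population_shares):
    """Weight each group's support rate by its share of the real population."""
    return sum(population_shares[g] * (s / n) for g, (n, s) in sample.items())


# Invented population make-up (an assumption, not historical data).
population = {"affluent": 0.25, "less_affluent": 0.75}

# Two million responses, 90% of them from the affluent group.
huge_biased = {"affluent": (1_800_000, 1_260_000), "less_affluent": (200_000, 70_000)}

# Fifty thousand responses in the same proportions as the population.
small_balanced = {"affluent": (12_500, 8_750), "less_affluent": (37_500, 13_125)}

print(raw_share(huge_biased))                   # candidate appears to be winning
print(raw_share(small_balanced))                # candidate is actually behind
print(weighted_share(huge_biased, population))  # weighting recovers the smaller figure
```

The huge sample gets the winner wrong and the small one gets it right, because size does nothing to correct who is in the sample. Weighting can repair the large sample only if each respondent's group was recorded, which is exactly the demographic information the Digest did not collect.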

While Twindex’s fundamental assumption creates an unrepresentative sample, Twindex also relies too heavily on the textual analysis of internet behaviour. Any predictions that stem from these methodologies must be treated with caution, especially when they contradict the findings of more traditional polling companies that use telephone and online methods to create representative samples.

One example was seen in 2010, when Robin Goad from Hitwise UK (now part of Experian), using a methodology similar to Twindex’s, wrongly predicted that the boy band One Direction would win the UK version of the television singing contest The X Factor. But come the final show, One Direction took a distant 3rd place while another contestant, Matt Cardle, not only won but also received the most votes each week. The methodology Goad used, which had correctly predicted the previous year’s winner, proved far from accurate. Survation, however, performed a properly weighted survey which took into account likelihood to vote, age and gender, and successfully predicted first, second, third and fourth places in the competition.

When the results from Twindex and traditional pollsters conflict, it shows where one can learn from the other, or at least it does for Twitter’s Head of Government News and Social Innovation. “If the [Twindex] dials are pointing in different directions, people are saying one thing to pollsters, and another in conversation,” explains Sharp. Twindex then appears to access something that traditional polling can’t: unprompted conversations in real time. But Twindex does not make allowances for the fact that tweets do not necessarily represent a person’s true opinions. People may be inclined to censor their tweets, aware that they are essentially published comments, free for anyone to see.

Pollsters have already had to learn that people are prepared to say different things when their answer is not anonymous. The polls leading up to the 1992 UK general election predicted a hung parliament, with the Conservatives around 1% behind Labour. The final results contradicted this, with the Conservatives winning by over 7%. In response to the failed predictions, an inquiry was held by the Market Research Society, which found that 2% of the 8.5% error could be explained by Conservative supporters refusing to disclose their voting intentions, meaning that people felt ashamed of their voting intention and therefore concealed it or lied. Pollsters know the benefits of online and less personal random telephone surveys, which remove face-to-face polling and thereby take away the pressure to answer in a particular way. In direct contrast, Twitter is inherently ‘exposing’, meaning that tweets should not always be taken at face value.

Your “online” versus your “true” self

Not only are people more likely to lie on Twitter, but they are just as likely to exaggerate. “Overall, what we see is that people tweet when they’re irritated about something,” says Rosenstiel, meaning that a Twitter user is more likely to tweet when they feel strongly opposed to something. This could mean that an overly emotional and therefore exaggerated opinion is expressed, rather than one that has been considered and measured thoughtfully. It is not difficult to find examples in the news of a Twitter user getting into trouble by sending these types of messages: one is the case of Paul Chambers, who had been charged with threatening to blow up an airport via Twitter and won his appeal when the judge accepted that he had indeed been joking.

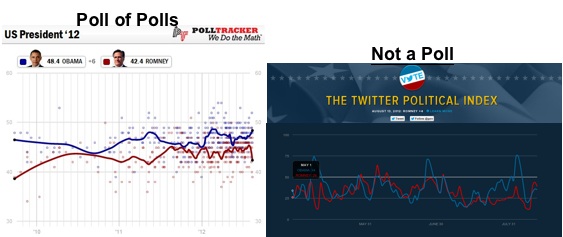

Spot the difference: positive vs negative correlation

Finally, looking at the actual results rather than the methodology, another major problem is apparent. In most measures of public opinion or voting intention in a two-candidate race, support for one candidate will fall as support for the other rises; their support will show strong negative correlation. After all, there are only so many votes to go round and the candidates must divide them up between them. On Twindex, however, support for the two candidates seems to show fairly strong positive correlation; it rises and falls together. This makes no sense in the context of actual political support, but is probably explained by some days having more ‘big news stories’ than others, thus leading to more tweets. The level of volatility also seems far higher than in normal polling, with both candidates regularly lurching from near 75 (strongly positive) to near 25 (strongly negative) in a matter of days, and then back again. As a result, Twindex tells us very little that seems reliable or valuable about how support levels change over time, compared with actual polling.
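The difference between the two patterns is easy to check with a correlation coefficient. The sketch below computes Pearson's correlation for two invented pairs of daily series: one behaves like vote shares in a two-candidate poll (the pair always sums to 100), the other like two index scores that swing together. None of these numbers are real poll or Twindex readings.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)


# Invented two-candidate poll: whatever one candidate gains, the other loses.
poll_a = [48, 50, 47, 51, 49]
poll_b = [100 - share for share in poll_a]

# Invented index-style scores: both candidates lurch up and down together.
index_a = [60, 35, 70, 30, 55]
index_b = [55, 40, 72, 28, 50]

print(pearson(poll_a, poll_b))    # close to -1: strong negative correlation
print(pearson(index_a, index_b))  # well above 0: strong positive correlation
```

A coefficient near -1 is what a fixed pool of votes split two ways produces. A clearly positive one suggests that both series are following some shared outside factor, such as the volume of news on a given day, rather than competing for the same support.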

Twindex is therefore not an accurate way to measure public opinion. Whenever Twindex does predict the right outcome, it is a lucky coincidence rather than anything to do with the integrity of the methodology. And although Sharp has been reported as saying that Twindex is not meant to replace traditional polling, journalists should still approach it with caution, especially since, as Rosenstiel points out, “if Twitter were a good predictor of public attitudes, Ron Paul would be the Republican nominee. Not Mitt Romney.”

By Grace Philip, with contributions from Patrick Briône.
